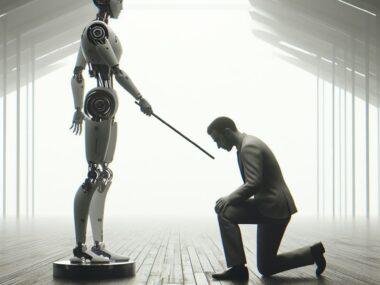

What happens when the company that promised to protect us from dangerous AI slowly becomes the danger?

I used to like OpenAI. A lot of us did. They arrived like a breath of fresh air in a landscape already dominated by tech giants chasing profit above all else. They said the right things. They presented themselves as a nonprofit. They talked about humanity, about safety, about how they were doing all of this for all of us, not for shareholders. It felt genuine. It felt different.

It wasn’t.

The Love Bomb We Didn’t See Coming

OpenAI’s origin as a nonprofit wasn’t a founding philosophy. It was a market entry strategy. The “we’re doing this for humanity” narrative was designed to play on people’s emotions. It made early adopters feel like partners in something noble, not customers of a product. And that emotional investment? It bought OpenAI something money couldn’t: unconditional trust at scale.

That trust lingered long after the reality of the situation settled in. The shift from nonprofit to “capped-profit” to full for-profit happened gradually. Each step was justified with careful language about necessity and mission. Most users weren’t watching closely enough to notice. By the time the mask came off, the attachment was already there.

That pattern has a name: love bombing. Recognizing it is what lets you see clearly.

Biggest vs. Best: A Question Nobody’s Asking Loudly Enough

OpenAI’s internal revenue target is reportedly $280 billion by 2030. Their infrastructure investment plan? An estimated $665 billion. Let that sink in for a moment.

When did “safe and beneficial AI” become a $665 billion infrastructure play?

Real nonprofits don’t organize their strategy around market domination. They organize around fulfilling a mission. Doctors Without Borders doesn’t have a slide deck about capturing 40% of the global healthcare market. The Red Cross doesn’t announce partnerships to become the biggest humanitarian organization on earth.

Wanting to be the biggest is a corporate instinct. Wanting to be the best, meaning the most careful, trustworthy, and safe, is something else entirely. OpenAI talks about the latter while sprinting toward the former.

And the financial deals keep piling up in ways that are getting hard to follow. The Microsoft entanglement. The NVIDIA partnership, announced as a landmark $100 billion investment, which months later turned out to still be just a “letter of intent.” Then it was restructured into a $30 billion deal as part of a different mega-round. Money moving in circles at a scale most of us can’t quite visualize.

Complexity doesn’t prove wrongdoing. But opacity isn’t the same thing as innocence.

Let’s Not Forget About OpenAI’s Lawsuit with the NYT

Here’s where I have to be direct with you, because I think this detail matters more than almost anything else in this conversation.

OpenAI is in the middle of an active copyright lawsuit with the New York Times. In May 2025, a court issued a preservation order requiring OpenAI to retain all ChatGPT conversation logs belonging to over 400 million users worldwide.

In January 2026, a federal judge ordered OpenAI to produce a 20 million-log sample to plaintiffs. The court’s reasoning was sobering: because users “voluntarily submitted their communications,” those conversations aren’t entitled to privacy protections.

Did OpenAI tell its users this was happening? Did you get an email? A notification? A banner the next time you logged in?

You didn’t. Most people didn’t.

A company that genuinely respected its users would have told them. Instead, 400 million people’s conversations are being held for legal reasons, and most of those people have no idea. This isn’t a small mistake. It reflects the company’s values and shows exactly where users stand in its priorities: not at the top.

Now We’re Getting Ads Thrown Into the Mix

Recently, OpenAI started showing ads to free and lower-tier ChatGPT users. COO Brad Lightcap called it an “iterative process” focused on user trust and privacy. According to the company, advertisers don’t get access to your chat logs, only aggregated data like impressions and clicks.

That sounds reassuring. And I want to be fair, because running frontier AI is extraordinarily expensive. Compute, data centers, and researcher salaries all add up. Using ads to subsidize free access at massive scale is a legitimate business model.

The problem isn’t the ads themselves. The issue is that these ads are coming on the heels of everything else: the lawsuits, OpenAI’s move away from its nonprofit mission, and the complicated financial deals with other companies.

Add to that the fact that ChatGPT handles deeply personal information. People tell ChatGPT things they don’t tell their doctors: their fears, their relationships, their finances. The idea that these private conversations could now help drive ad targeting, even indirectly through OpenAI’s internal profiling rather than direct data sharing, feels different from seeing a typical banner ad.

And the re-identification risk is real. Say you talk to ChatGPT about golf. Then ChatGPT shows you a golf ad. You click it while logged into Amazon. Amazon now knows that a ChatGPT user interested in golf just visited its site, and it can tie that visit to your purchase history and your identity. OpenAI never “shared” your chat, but something still leaked.

That chain, from a private chat to a targeted ad to a click on a site where you’re logged in, is how private conversations turn into commercial data, even with the best intentions.

Why This Goes Beyond Tech

OpenAI’s rise to power is a story about what happens when we trust institutions that talk about values more than they live by them.

We’ve seen this pattern before. Companies start by claiming moral authority, speaking the language of safety, service, and humanity. But over time, that promise is revealed to be a ruse, a way to build credibility and loyalty before they turn to their real priorities.

And we keep falling for it because we want to believe. We want to believe the people building powerful technology are doing it from a genuine desire to do good. That hope is human and reasonable.

The answer isn’t to become cynical about everything. It’s to hold institutions to their stated values consistently, without letting them off the hook.

OpenAI built something remarkable. But a company can be brilliant and ethically compromised at the same time.

If OpenAI wants to rebuild trust, the solution is simple: tell users about the lawsuit. Stop hiding monetization decisions behind word salad. Be honest about the fact that the mission has changed. Treat the people using your product like adults who deserve transparency, not an audience to be managed.

Until then, it’s okay to feel the way you do. In this case, skepticism isn’t paranoia. It’s pattern recognition.